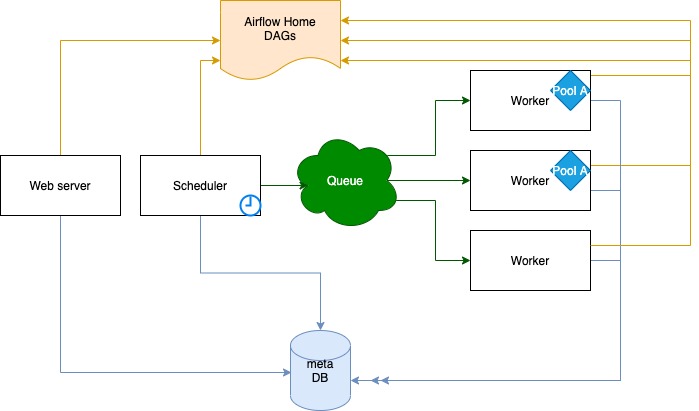

Airflow will use the directory set in the environment variable AIRFLOW_HOME to store its configuration and our SQLite database. If you don't set AIRFLOW_HOME, Airflow will create the directory ~/airflow/ to put its files in. This directory will be used after your first Airflow command. The default database is a SQLite database, which is fine for this tutorial. In a production setting you'll probably be using something like MySQL or PostgreSQL. You'll probably want to back the database up, as it stores the state of everything related to Airflow.
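To make the fallback concrete, here is a minimal sketch of the lookup described above. It is not Airflow's own code: the helper name `resolve_airflow_home` is hypothetical, and treating an empty AIRFLOW_HOME the same as an unset one is an assumption of this example.

```python
import os
from pathlib import Path


def resolve_airflow_home(environ=None):
    """Return the directory this sketch expects Airflow to use for its files.

    Hypothetical helper for illustration only, not part of Airflow.
    """
    env = os.environ if environ is None else environ
    configured = env.get("AIRFLOW_HOME")
    # Assumption: an empty value is treated like an unset variable.
    if configured:
        return Path(configured).expanduser()
    # Fallback described in the text: ~/airflow/
    return Path.home() / "airflow"


print(resolve_airflow_home())
```

Printing the result before running your first Airflow command is a quick way to see which directory you will need to back up.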

Run Airflow

Before you can use Airflow you have to initialize its database. The database contains information about historical and running workflows and connections to external data sources. Once the database is set up, Airflow's UI can be accessed by running a web server and workflows can be started.

You may run into problems if you don't have the right binaries or Python packages installed for certain backends or operators. When specifying support for PostgreSQL while installing extra Airflow packages, make sure the database is installed: do a brew install postgresql or apt-get install postgresql before the pip install apache-airflow. Similarly, when running into HiveOperator errors, do a pip install apache-airflow and make sure you can use Hive.

If you've installed apache-airflow, use pip install apache-airflow and do not use pip install airflow. Leaving out the prefix apache- will install an old version of Airflow next to your current version, leading to a world of hurt.
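The warning about missing binaries or Python packages can be checked before installing anything. The sketch below uses only the standard library. The function name `missing_prerequisites` is hypothetical, and the names `psql` and `psycopg2` in the usage line are assumptions about what a PostgreSQL setup might need, not something this tutorial specifies.

```python
import importlib.util
import shutil


def missing_prerequisites(binaries, modules):
    """List the binaries and top-level Python modules that cannot be found.

    Hypothetical helper for illustration; pass top-level module names only.
    """
    missing = []
    for name in binaries:
        # shutil.which returns None when the executable is not on PATH.
        if shutil.which(name) is None:
            missing.append(("binary", name))
    for name in modules:
        # find_spec returns None when a top-level module is not importable.
        if importlib.util.find_spec(name) is None:
            missing.append(("module", name))
    return missing


# Assumed example names; adjust them to the backend or operator you use.
print(missing_prerequisites(["psql"], ["psycopg2"]))
```

An empty list means everything you asked about was found. Anything listed is worth installing before you retry the pip install.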

Make sure that you install any extra packages under the right Python package name. Airflow used to be packaged as airflow but has been packaged as apache-airflow since version 1.8.1.
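If you are unsure which of the two package names is installed in your environment, you can ask Python directly. This is a minimal sketch using the standard library's `importlib.metadata` (Python 3.8 or later); the function name `installed_versions` is hypothetical.

```python
from importlib import metadata


def installed_versions(names=("airflow", "apache-airflow")):
    """Map each distribution name to its installed version, or None if absent.

    Hypothetical helper for illustration only.
    """
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


print(installed_versions())
```

If both names report a version, you most likely have the old package sitting next to the new one, which is the situation to avoid.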
